Deadlock Avoidance

I am Jyotiprakash, a deeply driven computer systems engineer, software developer, teacher, and philosopher. With a decade of professional experience, I have contributed to various cutting-edge software products in network security, mobile apps, and healthcare software at renowned companies like Oracle, Yahoo, and Epic. My academic journey has taken me to prestigious institutions such as the University of Wisconsin-Madison and BITS Pilani in India, where I consistently ranked among the top of my class.

At my core, I am a computer enthusiast with a profound interest in understanding the intricacies of computer programming. My skills are not limited to application programming in Java; I have also delved deeply into computer hardware, learning about various architectures, low-level assembly programming, Linux kernel implementation, and writing device drivers. The contributions of Linus Torvalds, Ken Thompson, and Dennis Ritchie—who revolutionized the computer industry—inspire me. I believe that real contributions to computer science are made by mastering all levels of abstraction and understanding systems inside out.

In addition to my professional pursuits, I am passionate about teaching and sharing knowledge. I have spent two years as a teaching assistant at UW Madison, where I taught complex concepts in operating systems, computer graphics, and data structures to both graduate and undergraduate students. Currently, I am an assistant professor at KIIT, Bhubaneswar, where I continue to teach computer science to undergraduate and graduate students. I am also working on writing a few free books on systems programming, as I believe in freely sharing knowledge to empower others.

Deadlock avoidance is a strategy that operates by ensuring the system always remains in a state where deadlocks are theoretically impossible, as opposed to deadlock prevention methods which restrict how processes request resources to eliminate at least one of the necessary conditions for a deadlock. Deadlock avoidance does this by requiring additional information upfront about the maximum resource needs of each process and then making careful decisions about resource allocation based on this information.

Concept of Safe State

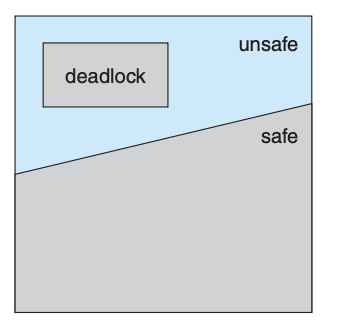

A crucial concept in deadlock avoidance is the idea of a "safe state." A state is considered safe if the system can allocate resources to every process in some sequence without leading to a deadlock. This means that even if a process's immediate resource needs cannot be fully satisfied, there's still a way to order the resource allocation such that all processes can complete their tasks by waiting for others to release their resources first.

In a safe state, there exists a "safe sequence" of processes where, for each process in the sequence, its remaining resource requests can be satisfied with the currently available resources, plus those held by all previously finished processes in the sequence. If a process needs to wait because its resources aren't immediately available, it can do so knowing that it will eventually proceed once the necessary resources are freed by the earlier processes.

Moving Between States

It's possible for a system to transition from a safe state to an unsafe state, where an unsafe state is one that might lead to a deadlock. This transition can occur if the system allocates resources without ensuring the remaining state is safe. An unsafe state isn't necessarily a deadlock but is a scenario where the risk of falling into a deadlock exists because the system might not be able to fulfill all future resource requests.

Example Scenario

Consider a system with three processes and twelve tape drives, where each process has declared its maximum tape drive needs. The system can ensure it remains in a safe state by only fulfilling resource requests that continue to guarantee the existence of a safe sequence. If a process's request would transition the system to an unsafe state, the request is deferred until it's safe to proceed.

Avoidance Algorithms

Deadlock avoidance algorithms use this principle of staying within a safe state to dynamically check whether a current resource request can be satisfied without leading to a potential deadlock. If granting a request would leave the system in an unsafe state, the request is not immediately granted, even if the resources are currently available. This cautious approach can lead to lower resource utilization since resources might be left idle that could have been allocated without actually causing a deadlock. However, it effectively avoids deadlocks by ensuring that any sequence of process completions is possible without getting stuck.

Deadlock avoidance works by intelligently granting or deferring resource requests based on a detailed understanding of each process's needs and the system's current and potential future states. This approach requires more initial information and computational overhead than simple deadlock prevention but can result in more flexible and efficient resource allocation while still avoiding deadlocks.

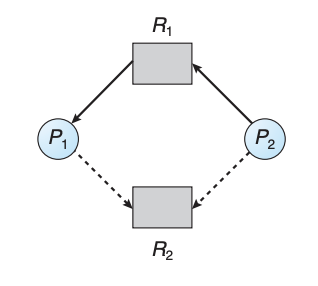

In systems where each resource type only has one instance, deadlock avoidance can be enhanced by introducing a concept known as a "claim edge" to the resource-allocation graph initially described for deadlock detection. A claim edge, depicted as a dashed line from a process (Pi) to a resource (Rj), signifies that Pi might request Rj in the future. This edge is directional, similar to request and assignment edges, but is visually distinct to indicate a potential future request rather than an immediate one.

Before a process starts, it must declare all its potential resource requests through claim edges in the graph. This requirement ensures the system is aware of all possible future requests, although it's possible to add new claim edges later as long as they are the only type of edges associated with that process at the time of addition.

When a process actually requests a resource it previously claimed, the corresponding claim edge is transformed into a request edge. If the process's request is granted and the resource is allocated, this request edge then becomes an assignment edge. Conversely, when a resource is released, the assignment edge reverts to a claim edge, indicating the resource may be requested again in the future.

The key to using this graph for deadlock avoidance lies in ensuring that converting a claim edge to an assignment edge (indicating resource allocation) does not create a cycle in the graph. The presence of a cycle would indicate an unsafe state, potentially leading to deadlock, as it would mean the processes involved are waiting on each other in a circular manner. To check for the safe state, a cycle-detection algorithm is employed whenever a resource request is made, requiring computational effort proportional to the square of the number of processes in the system.

If no cycle is detected when a process requests a resource, the resource can be safely allocated, keeping the system in a safe state. However, if a cycle would be formed by allocating the resource, the system would enter an unsafe state, and the requesting process must wait, avoiding the potential deadlock.

For example, consider a scenario in which P2 requests resource R2. Even if R2 is not currently being used, allocating it to P2 could create a cycle in the resource-allocation graph. This would indicate an unsafe state, showing that the system is at risk of deadlock if P1 and P2 proceed to request each other's resources, hence the request by P2 for R2 cannot be granted immediately to prevent this potential deadlock.